

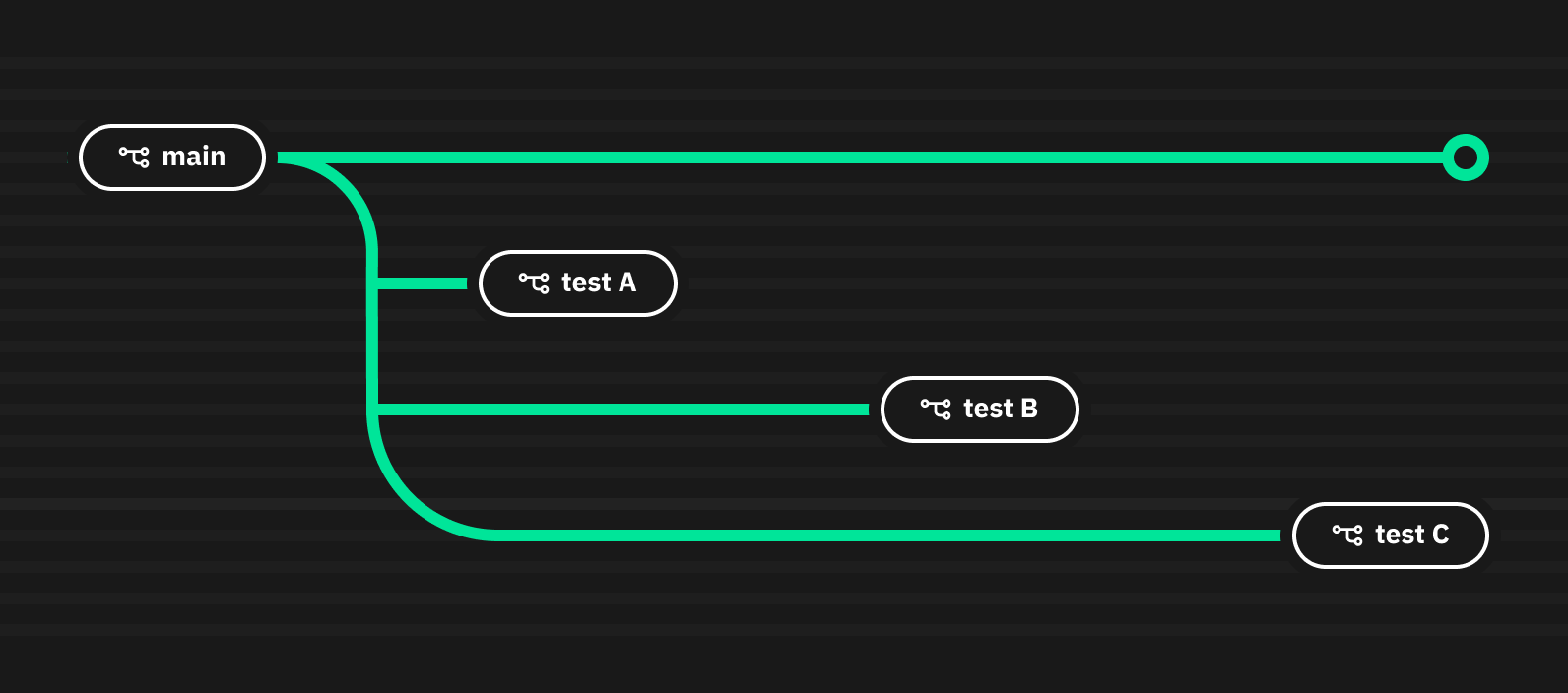

Neon is an open-source serverless Postgres platform that separates storage and compute, enabling instant database branching, true scale-to-zero, and autoscaling without any configuration. Now part of Databricks, it's the go-to serverless Postgres for modern development teams and AI-native applications.

Neon is an open-source serverless Postgres platform that rebuilds the PostgreSQL storage layer from scratch, separating compute from storage to enable instant database branching, true scale-to-zero, and on-demand autoscaling. We rate it 79/100: an excellent choice for solo developers, startups, and AI-native application builders who want real Postgres without the overhead of managing a server, though teams running very high-throughput production workloads should proceed with some caution.

Neon was founded by a team of core PostgreSQL contributors. The company's core insight was that PostgreSQL didn't need a proxy to become serverless; it needed a completely different storage layer. In May 2025, Neon was acquired by Databricks, giving it the infrastructure and enterprise credibility of one of the largest data platforms in the world. Today the product is marketed as "Serverless Postgres, by Databricks."

The GitHub repository has amassed over 21,400 stars and the codebase is primarily written in Rust, with the storage layer (called the Pageserver) handling copy-on-write data versioning that makes database branching essentially free. Unlike Amazon RDS, PlanetScale, or traditional managed Postgres providers, Neon doesn't just add an abstraction on top of a running database — the compute layer is genuinely stateless, which is why scale-to-zero is real and why branching costs nothing in terms of storage duplication.
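To make the "branching costs nothing" claim concrete, here is a toy sketch of copy-on-write branching. It is not Neon's Pageserver code: the `Branch` and `_Layer` classes and their methods are hypothetical names invented for illustration, and a real system tracks page versions by log position rather than with in-memory dictionaries. The point it illustrates is only that a new branch shares all existing pages with its parent and stores nothing until it writes.

```python
class _Layer:
    """Immutable set of page versions shared by every branch built on it."""

    def __init__(self, pages, parent):
        self.pages = pages      # dict: page_id -> data, never mutated again
        self.parent = parent    # older shared layer, or None


class Branch:
    """Hypothetical copy-on-write branch: a private delta over shared layers."""

    def __init__(self, base=None):
        self._base = base       # shared, frozen history
        self._delta = {}        # pages written on this branch since it forked

    def write(self, page_id, data):
        # Writes only ever touch this branch's private delta.
        self._delta[page_id] = data

    def read(self, page_id):
        # Newest version wins: private delta first, then shared history.
        if page_id in self._delta:
            return self._delta[page_id]
        layer = self._base
        while layer is not None:
            if page_id in layer.pages:
                return layer.pages[page_id]
            layer = layer.parent
        raise KeyError(page_id)

    def branch(self):
        # Freeze the current delta into a shared layer. Parent and child both
        # continue from it with empty deltas, so no page data is copied.
        frozen = _Layer(self._delta, self._base)
        self._base = frozen
        self._delta = {}
        return Branch(base=frozen)

    def owned_pages(self):
        # Number of pages this branch stores on its own.
        return len(self._delta)


main = Branch()
main.write(1, "users v1")
main.write(2, "orders v1")

dev = main.branch()           # instant: nothing is duplicated
dev.write(2, "orders v2")     # only this page is stored for dev
main.write(1, "users v2")     # later writes on main do not leak into dev
```

In this sketch `dev` still reads the original version of page 1 from the shared layer, and its own storage footprint is the single page it modified.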

The developer community is broadly positive about Neon's architecture and developer experience. On the Hacker News thread covering Neon's general availability, one developer called it "by far the best serverless database I've used," with widespread praise for the branching feature. Multiple developers migrating from AWS RDS reported smoother-than-expected transitions with no performance regression for typical OLTP workloads. The Databricks acquisition generated mostly positive sentiment on HN, with commenters noting the infrastructure backing now meaningfully reduces startup-risk concerns.

That said, Neon has a vocal minority of critics. Historical reliability issues, including "12 significant outages in Q4 2023" cited by one former user, still come up in threads. Pricing has been a recurring complaint, though Neon cut storage costs dramatically post-acquisition (from $1.75/GB-month to $0.35/GB-month). The serverless WebSocket driver introduces 50–100ms of overhead compared to direct TCP connections, which matters for latency-sensitive applications. Frontend code that polls the database on a timer is another frequently flagged pattern: the steady trickle of queries keeps compute from ever going idle, so scale-to-zero never activates.
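The polling complaint is easy to see with a small model. The sketch below assumes compute suspends after a fixed idle timeout with no queries; the 300-second value and the `suspended_seconds` helper are illustrative assumptions, not Neon's documented behavior or API.

```python
def suspended_seconds(query_times, window_end, idle_timeout):
    """Seconds spent suspended in [0, window_end], assuming compute is
    active at time 0 and suspends once `idle_timeout` seconds pass
    without a query. All times are in seconds."""
    suspended = 0
    previous = 0
    # Treat the end of the window as a final boundary so trailing idle counts.
    for t in sorted(query_times) + [window_end]:
        gap = t - previous
        if gap > idle_timeout:
            suspended += gap - idle_timeout
        previous = t
    return suspended


IDLE_TIMEOUT = 300   # assumed idle timeout, in seconds
HOUR = 3600

# A dashboard that polls every 60 seconds never leaves a long enough gap.
polling = suspended_seconds(range(0, HOUR, 60), HOUR, IDLE_TIMEOUT)

# Two real queries in the hour leave most of it idle.
sparse = suspended_seconds([0, 1800], HOUR, IDLE_TIMEOUT)
```

Under these assumptions the polling workload is never suspended, while the sparse workload is suspended for 3,000 of the 3,600 seconds. Any polling interval shorter than the idle timeout has the same effect.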

Neon uses a usage-based model with a generous free tier. Pricing was significantly reduced after the Databricks acquisition in May 2025.

| Plan | Typical Monthly Cost | Key Limits |
|---|---|---|
| Free | $0/month | 100 projects, 100 CU-hrs/project, 0.5 GB storage/project, compute up to 2 CU (8 GB RAM), 60K Auth MAUs |
| Launch | ~$15/month (usage-based) | 100 projects, $0.106/CU-hr, $0.35/GB-month, up to 16 CU (64 GB RAM), 1M Auth MAUs, 7-day time travel |
| Scale | ~$701/month (usage-based) | 1,000+ projects, $0.222/CU-hr, $0.35/GB-month, up to 56 CU (224 GB RAM), 30-day time travel, SLAs, SOC2, HIPAA, private networking |
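As a rough guide to how the usage-based figures add up, the sketch below estimates a Launch-plan bill from the two rates in the table. It is a simplification: the `estimate_monthly_cost` helper and both workloads are hypothetical, and the model ignores anything billed beyond compute hours and storage.

```python
# Launch-plan rates taken from the table above.
LAUNCH_CU_HOUR_USD = 0.106
LAUNCH_STORAGE_GB_MONTH_USD = 0.35


def estimate_monthly_cost(cu_hours, storage_gb,
                          cu_rate=LAUNCH_CU_HOUR_USD,
                          storage_rate=LAUNCH_STORAGE_GB_MONTH_USD):
    """Compute plus storage for one month, in USD, rounded to cents."""
    return round(cu_hours * cu_rate + storage_gb * storage_rate, 2)


# Hypothetical bursty app: 100 CU-hours of compute and 10 GB of storage.
bursty = estimate_monthly_cost(cu_hours=100, storage_gb=10)

# Hypothetical always-on app: 1 CU for roughly 730 hours, same storage.
always_on = estimate_monthly_cost(cu_hours=1 * 730, storage_gb=10)

print(f"bursty: ${bursty:.2f}  always-on: ${always_on:.2f}")
```

With these inputs the bursty workload comes to about $14.10 and the always-on one to about $80.88, which shows why the savings depend on the database actually sitting idle for much of the month.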

Best for: Solo developers and small-to-mid-size teams building with modern stacks — especially those deploying on Vercel, Cloudflare Workers, or other serverless platforms where connection pooling is a challenge. Neon is particularly compelling for AI-native applications (where the MCP server and REST Data API reduce friction significantly), teams with many ephemeral environments (the branching model is genuinely transformative for CI/CD), and projects where cost efficiency during low-traffic periods matters — scale-to-zero eliminates idle costs entirely.

Not ideal for: Applications requiring ultra-low latency on every query (the serverless driver adds overhead), organizations needing 99.99% uptime guarantees without a Scale plan, or teams already heavily invested in Aurora PostgreSQL with complex replication setups. If you're running a database at sustained high load with no idle windows, traditional managed Postgres (RDS, Cloud SQL) may be more cost-effective at scale.

Pros:

Cons:

Supabase offers a broader feature set (auth, storage, realtime, edge functions) on top of Postgres but doesn't offer the same storage/compute separation or instant branching. It's the better choice if you want a full BaaS. PlanetScale runs on MySQL rather than Postgres and recently killed its free tier — less compelling for the open-source crowd in 2026. Turso is built on SQLite (libSQL) and is faster for edge deployments with tiny databases, but lacks the relational power and ecosystem of Postgres.

For the target audience (developers building serverless apps or AI projects, and teams who want clean per-environment database branching in their CI/CD), Neon is the best option available in 2026. The architecture is genuinely innovative rather than a bolt-on, the free tier is real and usable, and the Databricks acquisition has addressed the biggest existential concern. We deduct points for the serverless driver latency, the historical reliability issues, and the steep Scale plan pricing at high compute. Rating: 79/100.
